Inside Today's Meteor

- Disrupt: Philosophy on a Deadline

- Create: Cool AI Art NFTs on the Block

- Compress: In breakthrough, scientists use GPT AI to passively read people's thoughts using fMRI.

- Cool Tools: AgentGPT evaluates sub-actions and completes tasks accordingly to effectively answer the queries of its users.

Philosophy on a Deadline

The title of this issue (above) is how AI ethics researcher Connor Leahy describes the problem of developing guardrails for a superintelligent artificial general intelligence.

Leahy is the founder and CEO of Conjecture, which he created in 2022 after he reverse engineered OpenAI's GPT-2 large language model and got worried. He has since secured backing from some serious people to try to "bound-limit" AI, meaning to prevent it from taking off into a singularity event. On board are former GitHub CEO Nat Friedman; Daniel Gross, a former machine learning director at Apple; Andrej Karpathy, Tesla’s former head of AI (who also worked as a researcher at DeepMind and OpenAI); and Stripe founders Patrick and John Collison.

Philosophy on a deadline.

I like this phrase, and not just because I studied philosophy, of which ethics is a branch, or because we urgently need to create some rational and well-founded rules about how we develop and launch AI products.

I like it because AI poses unique problems of prediction and control that will complicate what already promises to be a difficult process of creating regulations and laws to keep it safe while maximizing potential benefits. Solutions that work are as likely to be non-intuitive and indirect as not.

Regulators aren't waiting for the philosophers.

China has already weighed in, passing a law in April aimed at generative AI tools, such as ChatGPT, banning AI that might "undermine national unity" or "split the country."

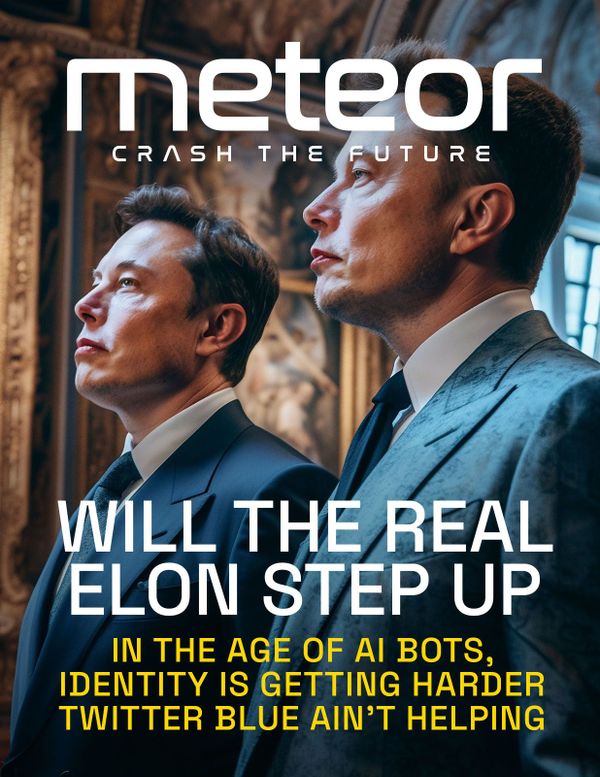

The US White House has drafted an AI Bill of Rights, and Elon Musk last week met with US lawmakers to discuss regulating AI. Musk himself has endorsed a call for a six-month moratorium on launching new large language models, the AI behind popular services like chatbots and text-to-image generators.

Most significantly, the EU Parliament last week agreed on draft legislation that will go to committee vote by May 11, and then on towards ratification. If member states agree, which is expected, it will likely become the first significant AI law with broad influence, thanks to the so-called Brussels Effect, particularly for AI applications that interact with humans and systems that are interconnected over the Internet.

The purpose of the law is twofold: to protect consumers and to foster innovation that helps Europe become a major AI hub (it currently is not). As a result, the EU is actually taking a relatively light touch for now, which has some people calling for a tougher stance.

The draft identifies and attempts to ban a small set of prohibited AI uses, including subliminal techniques, targeting of vulnerable groups, manipulative or exploitative practices, AI-based social scoring by public authorities, and most uses of real-time public biometric identification systems in law enforcement.

It also creates a process for self-certification and government oversight of many categories of "high-risk" AI systems, and imposes transparency requirements for AI systems that interact with people.

In its current form, many AI applications may be unaffected.

Ironically, the generative AI that's driving much of the political panic from the 'Regulate Now!' faction appears to fall into that last, largely unaffected category, although a provision was recently added requiring companies that create such tools to disclose whether they used copyrighted material in developing their models. The provision feels tacked on to a proposal that first began taking shape over two years ago, long before ChatGPT, Stable Diffusion and Midjourney made their debut.

All of this seems very tactical and gets us nowhere close to philosophy, or even a real "deadline." To understand this part, we need to look at what comes next with enforcement.

We have noted before the strange emergent properties of AI systems, which can behave unpredictably and may even be said to exhibit autonomous behavior.

With self-certification, companies are asked to test and guarantee that their creations will avoid harmful actions. In reality, this may not be possible to achieve with 100 percent accuracy, simply because of the way AI works.

Take China's AI law, which attempts to prevent AI chatbots from generating certain kinds of political speech, such as any mention of the Tiananmen Square massacre.

While companies can use a variety of techniques to reduce the odds of producing such a gaffe, they are unlikely to be able to reduce those odds to zero using a model trained on Internet data. Using various adversarial methods, a motivated person might always be able to elicit the response anyway, and there may be no way to prove in advance that such an attack is not possible.

In crafting the law, China has recognized that the responsibility for making AI safe may rest not only with the people creating it, but also with the people using it. As a result, it has made it illegal to jailbreak AI, and requires anyone using AI tools to verify their identity.

Should our right to access AI tools require a government-issued license, like owning a gun, driving a car or flying an airplane?

The unpredictability of AI systems will only become more pronounced as their capabilities increase towards a true Artificial General Intelligence. While necessary, regulation alone may not be sufficient to ensure a safe, aligned and human-centric AI future.

Another radical approach is gaining traction among advocates of open source AI, in which models are released publicly to allow more people to research how they work and develop mitigations. In this view, the risk of AI falling into the hands of bad actors is smaller than the risk of leaving AI in the control of a handful of mega-rich corporations and governments. It's basically the opposite of regulation.

Time to bring in the philosophers.

Create

"The Company" by post photography artist Andrea Ciulu.

"Eve of the Revolution #1" by Photogurapher available on secondary at SuperRare.

"Mother" by Garis Edelweiss is available on SuperRare.

Compress

Mind Reading? Mind Reading

In breakthrough, scientists use GPT AI to passively read people's thoughts using fMRI.

Polygon Is on a Streak

Search giant Google signs partnership with Polygon Labs to offer its cloud services to support the Ethereum side chain.

Quitting to Speak His Mind

Geoffrey Hinton, the godfather of AI, leaves Google and warns of the dangers of the technology he helped create.

Cool Tools

Assemble, configure, and deploy autonomous AI Agents in your browser

Currently in its beta stage, AgentGPT evaluates sub-actions and completes tasks accordingly to effectively answer the queries of its users.